| Artificial Intelligence (AI) systems are increasingly developed and deployed for purposes related to migration, asylum and border control in ways that put fundamental rights at risk. From purported “lie detectors” to predictive analytics tools, EU migration policies are increasingly underpinned by the use of AI, which the EU AI Act seeks to regulate. However, in its original proposal, the EU AI Act fails to address the impacts that AI systems have on people on the move and non-EU citizens. A group of civil society organisations has been advocating for amendments to the AI Act that could make the Regulation an instrument of protection for everyone, regardless of their migration status. During this session, we will discuss the possibility of banning certain types of systems in the migration context, namely risk assessment systems and predictive analytics used to forecast migration, which increase push-backs at the EU borders. We will also discuss the need to regulate all ‘high-risk’ AI systems that are already deployed, such as fingerprint scanners that lead to forms of racial profiling and surveillance tech used to monitor borders and impede border crossings. The EU’s obsession with strengthening borders and enforcing deportation relies more and more on the use of surveillance technology. This is why in this session we will also discuss whether, and how, the AI Act can serve as an instrument to resist digital surveillance in other legislation such as the Schengen Borders Code and the New Pact on Migration and Asylum, both of which rely heavily on the increased use of surveillance technology. |

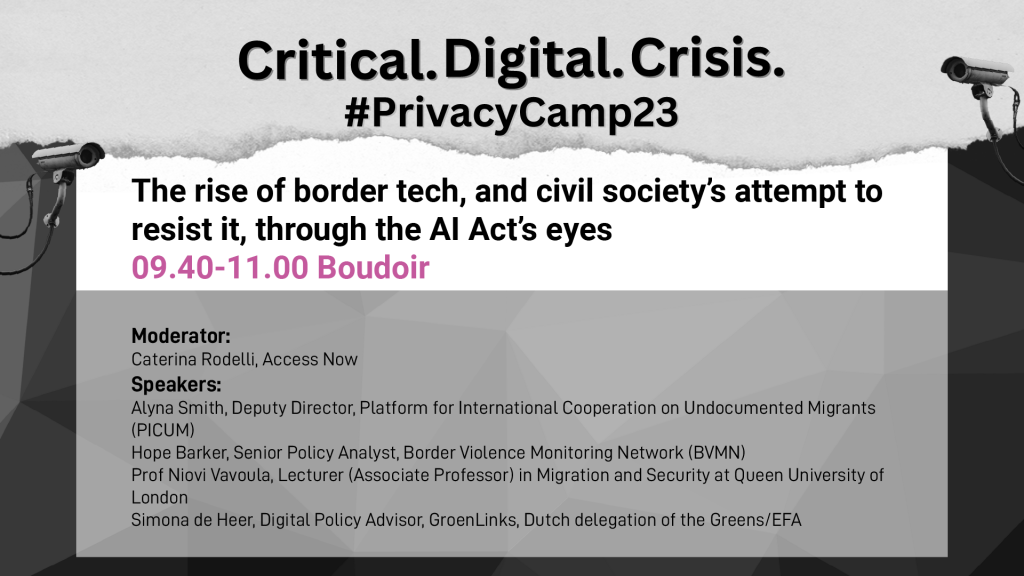

Moderator:

Caterina Rodelli, Access Now @CaterinaRodelli

Speakers:

- Alyna Smith, Deputy Director, Platform for International Cooperation on Undocumented Migrants (PICUM)

- Hope Barker, Senior Policy Analyst, Border Violence Monitoring Network (BVMN)

- Prof Niovi Vavoula, Lecturer (Associate Professor) in Migration and Security at Queen Mary University of London

- Simona de Heer, Digital Policy Advisor, GroenLinks, Dutch delegation of the Greens/EFA @SimonadeHeer